Let me be the first to admit that I am into minutiae; that is, I like details. But even I got bored during the day devoted to the subject of runway declarations during the Air Force Instrument Instructor Course. Years later, when I first visited RAF Farnborough (EGUF), I started to appreciate the subject. Of course the airport is now operated by TAG Aviation as Farnborough London Airport (EGLF). But you still need a firm grasp on the subject.

— James Albright

Updated:

2023-01-17

Farnborough is one of the few airports where (a) you are given all the data, and (b) all the data makes sense. At most airports you get the runway length and that's it. At other airports you might get the more critical numbers, but they might not add up. The problem is there are factors we don't know about and you have to call the airport manager. Even then, it may be so complicated you just have to take their word for it. I've called a few airports where I got them to change their published numbers and others where they let me know that I don't know what I'm talking about. In any case, it helps to understand the terminology and the basic math. What follows comes from a number of U.S. and ICAO regulations.

1

Definitions

Accelerate Stop Distance Available (ASDA):

The runway plus stopway length declared available and suitable for the acceleration and deceleration of any airplane aborting takeoff.

Source: AC 150/5300-13B, p. 1-6

The length of the take-off run available plus the length of the stopway, if provided.

Source: ICAO Annex 14 Vol 1, Definitions

Aerodrome elevation:

The elevation of the highest point of the landing area.

Source: ICAO Annex 14 Vol 1, Definitions

Airplane Design Group (ADG):

A classification of aircraft based on wingspan and tail height.

Source: AC 150/5300-13B, p. 1-4

Where an airplane falls into two groups, the more demanding group should be used. Group III covers a GV: tail height of 30 to less than 45 feet, wingspan of 79 to less than 118 feet.
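The "most demanding" rule can be sketched in a few lines of Python. Only the Group III limits are quoted above; the other rows are my recollection of the FAA table and should be checked against the AC before you rely on them. The function name and the GV dimensions used in the example (roughly 93.5 feet of wingspan and 25.8 feet of tail height) are illustrative assumptions.

```python
# Upper limits per group: (group, tail height ft, wingspan ft).
# Only the Group III row is quoted in the text; the other rows are assumed.
ADG_LIMITS = [
    ("I", 20, 49),
    ("II", 30, 79),
    ("III", 45, 118),
    ("IV", 60, 171),
    ("V", 66, 214),
    ("VI", 80, 262),
]


def _group_index(value_ft, column):
    """Return the index of the first group whose upper limit exceeds value_ft."""
    for index, row in enumerate(ADG_LIMITS):
        if value_ft < row[column]:
            return index
    raise ValueError("dimension exceeds the largest group in the table")


def airplane_design_group(wingspan_ft, tail_height_ft):
    """Classify by both dimensions and keep the more demanding group."""
    by_tail = _group_index(tail_height_ft, 1)
    by_span = _group_index(wingspan_ft, 2)
    return ADG_LIMITS[max(by_tail, by_span)][0]


# Approximate GV dimensions (assumed): the tail alone would be Group II,
# but the wingspan pushes the airplane into Group III.
print(airplane_design_group(wingspan_ft=93.5, tail_height_ft=25.8))
```

The point of the sketch is that the wingspan, not the tail, drives the classification for this airplane.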

Airport Elevation:

The highest point on an airport's usable runway expressed in feet above mean sea level.

Source: AC 150/5300-13B, p. 1-4

Airport Reference Point (ARP):

The approximate geometric center of all usable runways at the airport.

Source: AC 150/5300-13B, p. 1-4

Clearway:

Takeoff runway under control of the airport authorities which includes a plane extending from the end of the runway with an upward slope not exceeding 1.25% above which no obstacle or terrain protrudes.

Source: 14 CFR 1.1

A defined rectangular area beyond the end of a runway cleared or suitable for use in lieu of runway to satisfy takeoff distance requirements.

Source: AC 150/5300-13B, p. 1-5

A defined rectangular area on the ground or water under the control of the appropriate authority, selected or prepared as a suitable area over which an aeroplane may make a portion of its initial climb to a specified height.

Source: ICAO Annex 14 Vol 1, Definitions

Displaced Threshold:

A displaced threshold is a threshold located at a point on the runway other than the designated beginning of the runway. Displacement of a threshold reduces the length of the runway available for landings.

Source: AIM 2-3-3 §h.2.

A threshold not located at the extremity of a runway.

Source: ICAO Annex 14 Vol 1, Definitions

Landing Distance Available (LDA):

The runway length declared available and suitable for a landing airplane.

Source: AC 150/5300-13B, p. 1-6

The length of runway which is declared available and suitable for the ground run of an aeroplane landing.

Source: ICAO Annex 14 Vol 1, Definitions

Minimum Runway Width:

There really isn't one specified by regulation, but you can infer one thusly:

VMCG, the minimum control speed on the ground, is the calibrated airspeed during the takeoff run at which, when the critical engine is suddenly made inoperative, it is possible to maintain control of the airplane using the rudder control alone (without the use of nosewheel steering), as limited by 150 pounds of force, and the lateral control to the extent of keeping the wings level to enable the takeoff to be safely continued using normal piloting skill. In the determination of VMCG, assuming that the path of the airplane accelerating with all engines operating is along the centerline of the runway, its path from the point at which the critical engine is made inoperative to the point at which recovery to a direction parallel to the centerline is completed may not deviate more than 30 feet laterally from the centerline at any point. VMCG must be established with—

- The airplane in each takeoff configuration or, at the option of the applicant, in the most critical takeoff configuration;

- Maximum available takeoff power or thrust on the operating engines;

- The most unfavorable center of gravity;

- The airplane trimmed for takeoff; and

- The most unfavorable weight in the range of takeoff weights.

Source: 14 CFR 25.149(e)

So a G450, for example, has main gear which are 13'8" apart as measured from the strut center lines, so let's call that an even 14'. So the minimum runway width for a G450 is 30 + 30 + 14 = 74'.
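The same inference works for any airplane once you know the main gear track. Here is a minimal sketch; the function name is mine, and the result is an inferred figure, not a regulatory minimum.

```python
# Maximum lateral deviation allowed during VMCG determination, 14 CFR 25.149(e).
VMCG_MAX_DEVIATION_FT = 30.0


def inferred_min_runway_width_ft(main_gear_track_ft):
    """Allow 30 feet of deviation to either side, plus the main gear track."""
    return 2 * VMCG_MAX_DEVIATION_FT + main_gear_track_ft


# G450 main gear track, 13'8" rounded up to 14'.
print(inferred_min_runway_width_ft(14.0))  # 74.0
```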

Runway End Safety Area (RESA):

An area symmetrical about the extended runway centre line and adjacent to the end of the strip primarily intended to reduce the risk of damage to an aeroplane undershooting or overrunning the runway.

Source: ICAO Annex 14 Vol 1, Definitions

Runway Object Free Area (ROFA):

ROFA is a clear area limited to equipment necessary for air and ground navigation, and provides wingtip protection in the event of an aircraft excursion from the runway.

Source: AC 150/5300-13B, p. 3-52

Runway Safety Area (RSA):

A defined area surrounding the runway consisting of a prepared surface suitable for reducing the risk of damage to aircraft in the event of an undershoot, overshoot, or excursion from the runway.

Source: AC 150/5300-13B, p. 1-11

Runway Separation Standards:

An aircraft in Airplane Design Group III and approach category C or D requires 400 feet from the runway centerline to a taxiway centerline and 500 feet to an aircraft parking area. The distances increase 1 foot for each 100 feet above sea level.

Source: AC 150/5300-13

Without these clearances, some airports restrict GV activity. BCT, for example, does not allow a GV on the parallel taxiway unless the runway is clear, and does not allow a GV to land or take off unless the taxiway is clear.
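The elevation adjustment is simple enough to sketch. This assumes the rule exactly as stated above (1 foot per 100 feet of airport elevation, applied to both base distances); the function name and the 5,000-foot example airport are hypothetical.

```python
# Base separations for ADG III, approach category C and D, as quoted above.
RUNWAY_TO_TAXIWAY_FT = 400.0
RUNWAY_TO_PARKING_FT = 500.0


def adjusted_separation_ft(base_ft, airport_elevation_ft):
    """Add 1 foot of separation for every 100 feet of airport elevation."""
    return base_ft + airport_elevation_ft / 100.0


# Hypothetical airport at 5,000 feet MSL.
print(adjusted_separation_ft(RUNWAY_TO_TAXIWAY_FT, 5000))  # 450.0
print(adjusted_separation_ft(RUNWAY_TO_PARKING_FT, 5000))  # 550.0
```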

Stopway:

An area designated by the airport beyond the takeoff runway able to support the aircraft during an aborted takeoff.

Source: 14 CFR 1.1

A defined rectangular area on the ground at the end of take-off run available prepared as a suitable area in which an aircraft can be stopped in the case of an abandoned take off.

Source: ICAO Annex 14 Vol 1, Definitions

TODA (Takeoff Distance Available):

The TORA plus the length of any remaining clearway beyond the end of the TORA.

Source: AC 150/5300-13B, p. 1-6

The length of the take-off run available plus the length of the clearway, if provided.

Source: ICAO Annex 14 Vol 1, Definitions

TORA (Takeoff Run Available):

The runway length declared available and suitable for the ground run of an airplane taking off.

Source: AC 150/5300-13B, p. 1-6

The length of runway declared available and suitable for the ground run of an aeroplane taking off.

Source: ICAO Annex 14 Vol 1, Definitions

2

Calculated declared distances

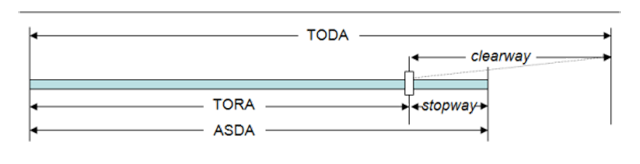

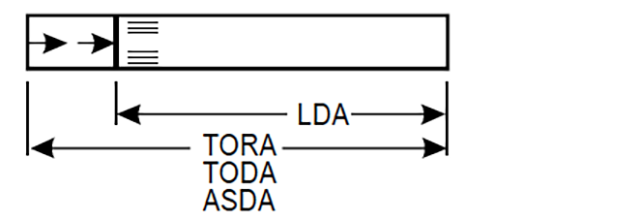

The declared distances to be calculated for each runway direction comprise: the take-off run available (TORA), take-off distance available (TODA), accelerate-stop distance available (ASDA), and landing distance available (LDA).

Source: ICAO Annex 14 Vol 1 Attachment A §3.1

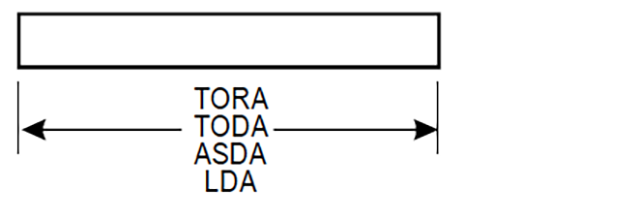

Where a runway is not provided with a stopway or clearway and the threshold is located at the extremity of the runway, the four declared distances should normally be equal to the length of the runway.

Source: ICAO Annex 14 Vol 1 Attachment A §3.2

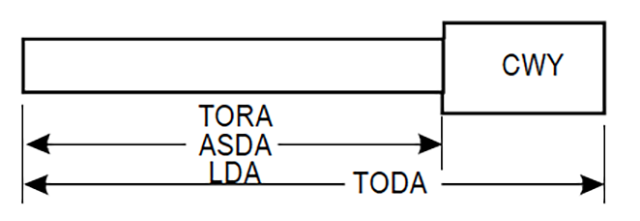

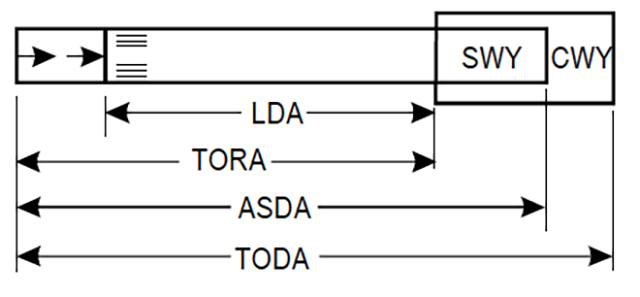

Where a runway is provided with a clearway (CWY), then the TODA will include the length of clearway.

Source: ICAO Annex 14 Vol 1 Attachment A §3.3

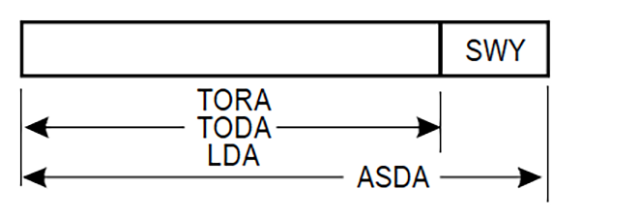

Where a runway is provided with a stopway (SWY), then the ASDA will include the length of stopway.

Source: ICAO Annex 14 Vol 1 Attachment A §3.4

Where a runway has a displaced threshold, then the LDA will be reduced by the distance the threshold is displaced, as shown in Figure A-1 (D). A displaced threshold affects only the LDA for approaches made to that threshold; all declared distances for operations in the reciprocal direction are unaffected.

Source: ICAO Annex 14 Vol 1 Attachment A §3.5
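The four rules from Attachment A can be collected into one small sketch. It covers only the textbook cases described above, for one runway direction at a time; the class name, field names, and example lengths are hypothetical, and real airports may declare numbers that differ for reasons the chart doesn't show.

```python
from dataclasses import dataclass


@dataclass
class RunwayDirection:
    """One runway direction, using the simple ICAO Attachment A cases only."""
    runway_length_ft: float
    clearway_ft: float = 0.0
    stopway_ft: float = 0.0
    displaced_threshold_ft: float = 0.0

    @property
    def tora(self):
        # With nothing else declared, TORA equals the runway length.
        return self.runway_length_ft

    @property
    def toda(self):
        # TODA includes the clearway, if provided.
        return self.tora + self.clearway_ft

    @property
    def asda(self):
        # ASDA includes the stopway, if provided.
        return self.tora + self.stopway_ft

    @property
    def lda(self):
        # A displaced threshold shortens only the LDA for this direction.
        return self.runway_length_ft - self.displaced_threshold_ft


# Hypothetical runway: 8,000' of pavement, 1,000' clearway, 500' stopway,
# and a threshold displaced 600'.
rwy = RunwayDirection(8000, clearway_ft=1000, stopway_ft=500,
                      displaced_threshold_ft=600)
print(rwy.tora, rwy.toda, rwy.asda, rwy.lda)  # 8000 9000 8500 7400
```

A runway with no clearway, stopway, or displaced threshold returns the same number four times, which matches §3.2.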

When airports start to mix various elements, declared distances can become difficult to figure out. One of the few places where it really matters to you is Farnborough. You will likely be loading enough fuel to make it home and understanding how much pavement you have at your disposal becomes critical. . .

3

Things get complicated

One of the more interesting and useful courses I've ever taken was the Air Force Instrument Instructor's School, which was at the same time uninteresting and not useful. Huh? We really got into the weeds and that level of detail was interesting for someone who likes to understand the why of things, but just too much detail for a pilot. For the same reason, the utility suffered. We learned, for example, how to determine the exact bank angle to fly on a tear drop entry to a procedure turn. I would show my students how 17.5 degrees of bank allowed me to roll out precisely on course. "Why not use a standard rate turn and roll out to intercept?" Ah, yeah, that would work too.

One of the things we did in that school was learn how to design a runway and extract all the TORAs, TODAs, and other numbers. That kind of knowledge leads to a bit of hubris in us instrument instructors which can only lead to disappointment when we find out we aren't as smart as we think we are. Given all the available runway distances, I used to think I could compute all the other numbers, as is the case with Farnborough, shown below. But that isn't always the case.

RSA and ROFA

Looking at the diagrams from ICAO Annex 14 Vol 1, shown above, you would think computing the ASDA is simply a matter of taking the runway length, adding the displaced threshold and stopway. Quite often that is the case. But there are exceptions. For example . . .

- Consideration for determining the ASDA:

- the start of takeoff,

- the RSA beyond the ASDA, and

- the ROFA beyond the ASDA.

Source: AC 150/5300-13B, p. H-11

And it gets more complicated than that. RSA, for example, must be at least 600 feet and there are rules about where that 600 feet must come from. There are similar complications for the other numbers. It is well beyond my scope (and ability) to cover how precisely each number is calculated. (AC 150/5300-13B is 434 pages long.) Rather, I just want to convey that the numbers don't always add up given the information you have. If your takeoff or approach numbers are critical, all you can do is take the given number at face value or call the airport manager.

4

Farnborough example

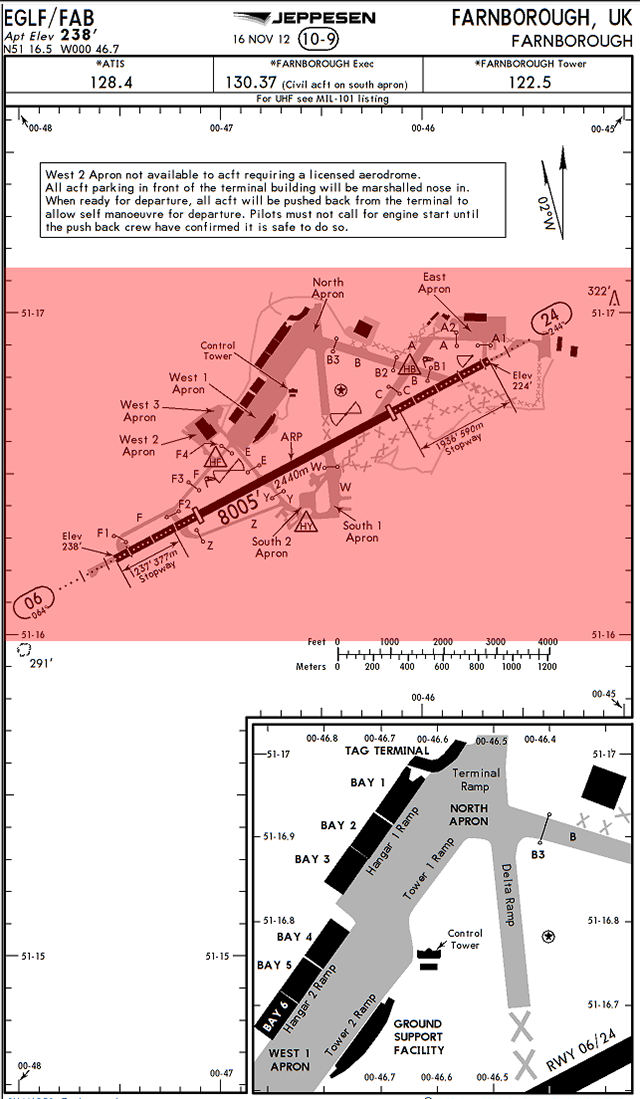

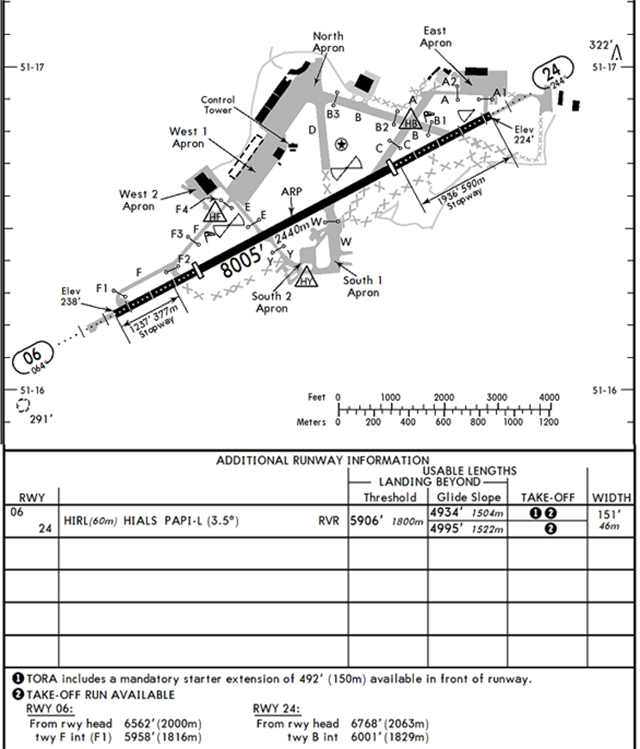

At first glance, the runway at Farnborough appears to be 8,005' and should be long enough to fuel the airplane up and head home. Right?

From the 10-9 page we see a distance of some sort: 8,005' as well as two areas labeled as stopways, 1,237' and 1,936'.

The first part of the runway in both directions is marked by white dots that do not appear in the Jeppesen airport directory chart legend and white boxes which are supposed to denote the displaced thresholds.

Turn the page over and things become even more complicated.

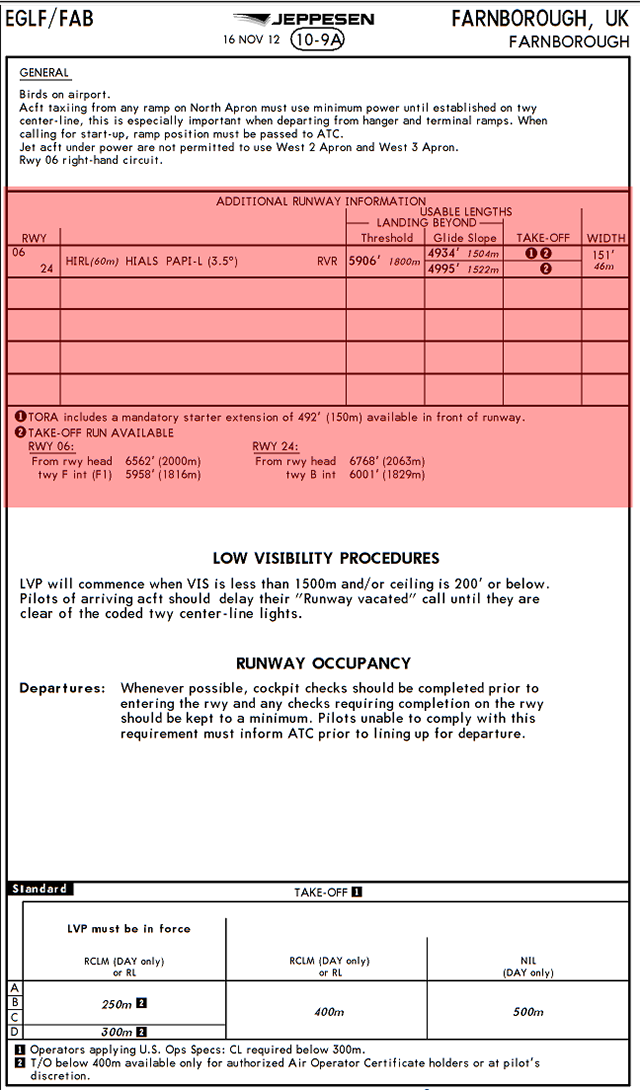

The 10-9A page says you have 5,906' landing distance for both runways but beyond the glide slope it gets shorter still by varying amounts. The TORA includes several notes.

- Runway 06 seems to add a starter extension of 492' but the TORA listed is only 6,562'.

- Runway 24 shows a TORA of 6,768'.

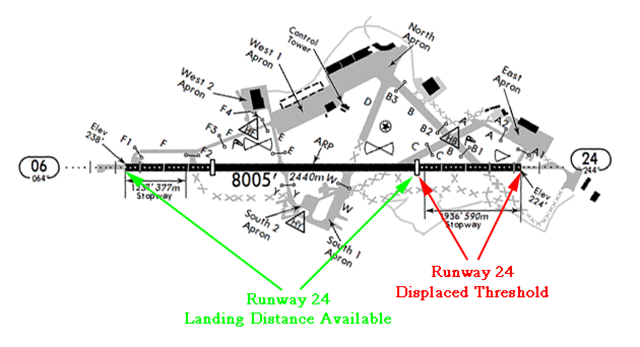

Landing

The 10-9A page says your landing distance beyond the threshold is 5,906' for both runways.

At first glance this doesn't seem to make sense: you cannot arrive at 5,906' by subtracting any of the other provided data from the runway's 8,005' length. If you look at the 10-9 page you will note the displaced threshold distance isn't given. But using the definitions in ICAO Annex 14, we see that the displaced thresholds are both equal to 8,005 minus 5,906, which equals 2,099'. Looking at the airfield diagram, this looks about right. So the provided 5,906' is actually your Landing Distance Available.
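Working backward from the published LDA is just the ICAO displaced-threshold rule rearranged. A minimal sketch, using the charted Farnborough figures; the variable names are mine.

```python
# Charted Farnborough figures from the 10-9 and 10-9A pages.
RUNWAY_LENGTH_FT = 8005
LDA_FT = 5906  # same value published for Runway 06 and Runway 24

# ICAO: LDA = runway length - displaced threshold, so solve for the threshold.
displaced_threshold_ft = RUNWAY_LENGTH_FT - LDA_FT
print(displaced_threshold_ft)  # 2099
```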

On Runway 06, for example, you can land any place beyond the displaced threshold and have the rest of the pavement to stop the airplane.

Video: EGLF Rwy 06.

On Runway 24, the distances are the same for the opposite direction.

Video: EGLF Rwy 24.

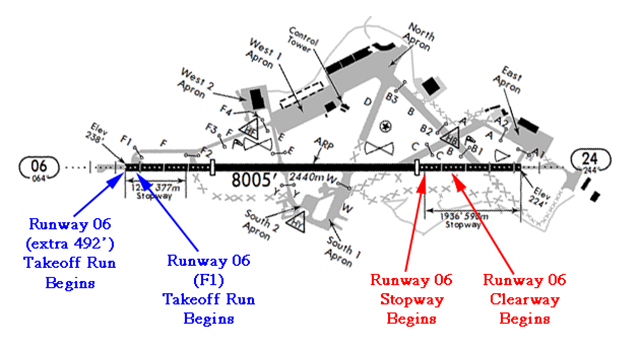

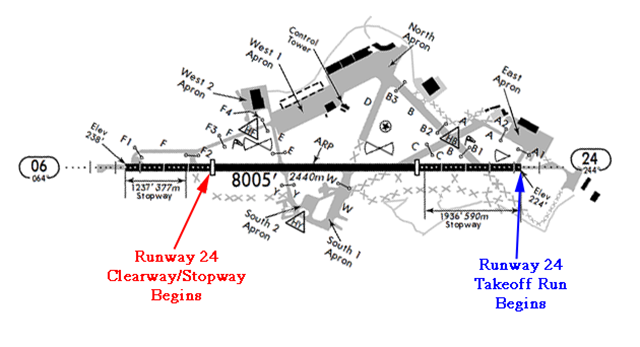

Takeoff

Once again the airport diagram and instructions muddy the waters because they do not spell out what you have in terms of a clearway and displaced threshold. You can, however, reason things through because they have labeled the stopways.

On Runway 06 you can either back taxi to take advantage of the extra 492' or start your roll at taxiway F1. If you abort the takeoff you have everything left to stop. If you continue the takeoff, you need to be off the ground before you get to the clearway and you need to be at least 35' in the air when you get to the end of the clearway.

Runway 06 Clearway = 8,005' Runway Length - 6,562' TORA = 1,443'.

On Runway 24 you can start at the runway's end or at Taxiway B. If you abort the takeoff you have everything left to stop. If you continue the takeoff, you need to be off the ground before you get to the clearway/stopway and at least 35' in the air when you get to the end of the clearway. (The clearway and stopways are the same for this runway.)

Runway 24 Clearway = 8,005' Runway Length - 6,768' TORA = 1,237'.
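Both clearway figures come from the same subtraction, and it is worth comparing the results against the stopways labeled on the 10-9 page. A short sketch with the charted numbers; the variable names are mine, and the comparison is only a cross-check, not a declaration of what the airport intends.

```python
RUNWAY_LENGTH_FT = 8005
TORA_FT = {"06": 6562, "24": 6768}   # from the 10-9A page
CHARTED_STOPWAYS_FT = {1237, 1936}   # labeled on the 10-9 page

# Clearway inferred as the pavement remaining beyond the end of the TORA.
clearway_ft = {rwy: RUNWAY_LENGTH_FT - tora for rwy, tora in TORA_FT.items()}

for rwy, length in clearway_ft.items():
    note = ("matches a charted stopway" if length in CHARTED_STOPWAYS_FT
            else "does not match a charted stopway")
    print(f"Runway {rwy}: clearway {length}' ({note})")
```

Only the Runway 24 result lines up with a labeled stopway, which is consistent with the clearway and stopway being the same piece of pavement in that direction.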

Why?

All of this makes sense when you first see the airport nestled into the once quiet English countryside. They want airplanes at a reasonable altitude when they cross the airport boundaries. The approach to Runway 24 and departure from Runway 06 are more sensitive because one of the finest pubs in the area sits just a mile to the east:

You can see the runway from the pub.

5

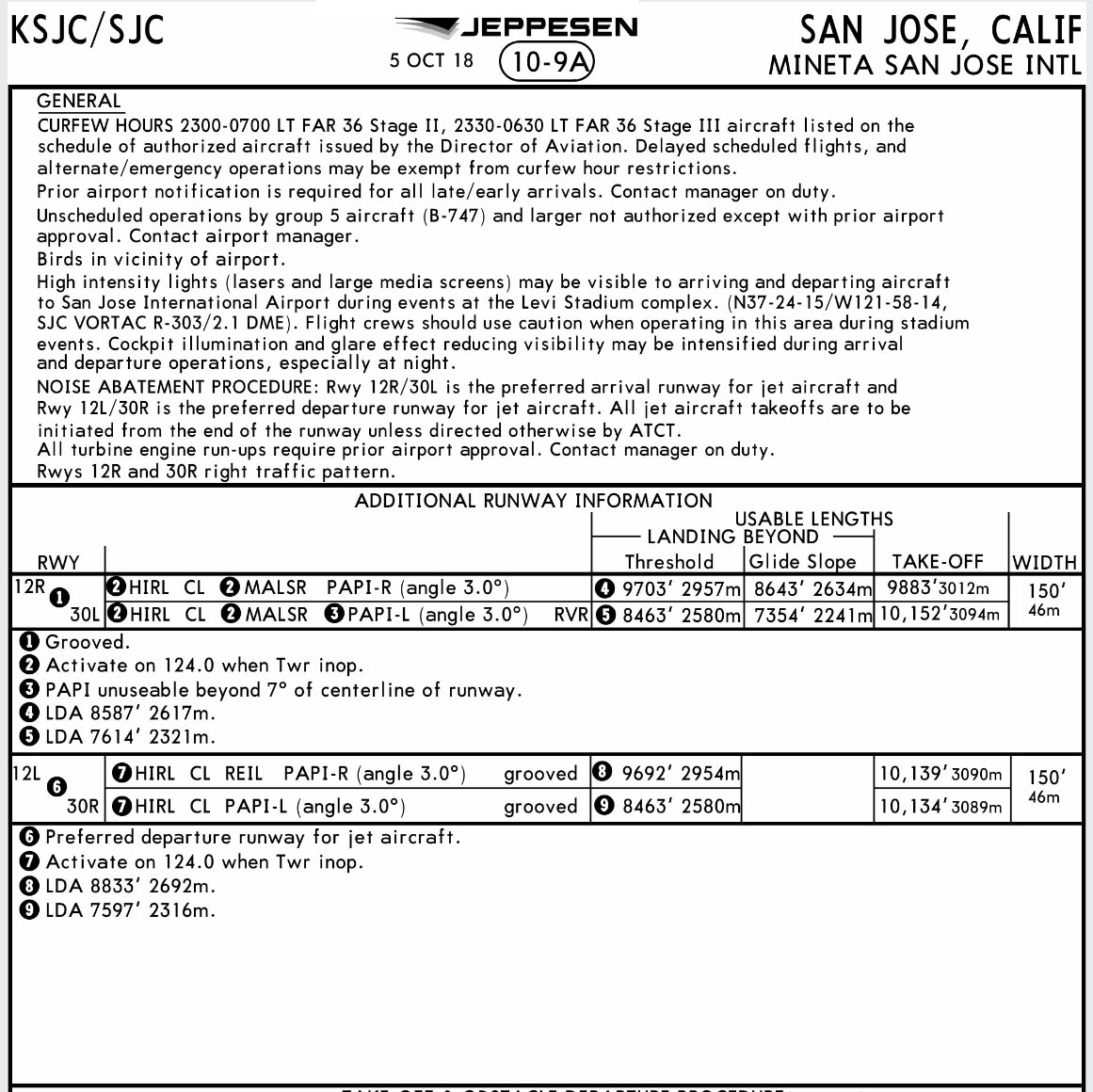

San Jose example

Dear James,

I tried to research this on my own with the help of your website and pulling down the general pub of Jeppesen pub but it went nowhere. Can you explain why the KSJC 10-9A lists displaced threshold distance (8463’) but note 5 states a LDA of 7614’? Our operation procedures has us insert the 8463’ in the HUD but it seems that may be erroneous. Any reference is kindly appreciated.

Signed, R. Fader

Fort Lee, New Jersey

Dear Mister Fader,

The problem is not everything gets labeled on the airfield diagram. So at KSJC Runway 30L/12R you have an 11,000’ runway with some displaced threshold on both ends and what is unclear is how much of the opposite end displaced threshold is considered available as a stopway. The end result for us pilots is that the distances don’t always add up. San Jose isn’t alone in this. I’ve called various airport managers up about this and have been surprised that quite often they don’t know. I called airport operations at San Jose and they were very helpful.

They sent the Airport Layout diagram. Everything adds up to within a foot now. The plan lists TODA = 11,000, ASDA = 10,152, LDA = 7,614, and Displaced Threshold = 2,537.

Doing the math, LDA = ASDA - DT = 10,152 - 2,537 = 7,615.

And, Landing Distance Beyond Threshold = TODA - DT = 11,000 - 2,537 = 8,463.

The difference between ASDA and TODA, in this case, is a stopway. It appears this runway has an 8,463 - 7,615 = 848’ stopway.

All of that brings us back to your original question: how much landing runway can you plan on? In this case, the shorter number is LDA which the ICAO says is “The length of runway which is declared available and suitable for the ground run of an aeroplane landing.” (Annex 14, Vol 1, Definitions) and the FAA says is “The runway length declared available and suitable for a landing airplane.” (AC 150/5300-13). This number does not include the stopway.

But what about the longer number? The landing distance beyond threshold does include the stopway. So what is a stopway? The ICAO says it is “A defined rectangular area on the ground at the end of take-off run available prepared as a suitable area in which an aircraft can be stopped in the case of an abandoned take off.” (Annex 14, Vol 1, Definitions) and the FAA says it is “An area designated by the airport beyond the takeoff runway able to support the aircraft during an aborted takeoff.” (14 CFR 1.1). So it is suitable or able, but not available. So it looks like the shorter number is the answer.

James
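The San Jose arithmetic in the reply can be checked in a few lines. The four input values are the ones quoted from the Airport Layout diagram; the variable names and the one-foot tolerance are my own choices, the latter to allow for rounding in the published figures.

```python
# Values quoted from the KSJC Airport Layout diagram.
TODA_FT = 11000
ASDA_FT = 10152
LDA_FT = 7614
DISPLACED_THRESHOLD_FT = 2537

lda_from_asda = ASDA_FT - DISPLACED_THRESHOLD_FT        # 7615
beyond_threshold = TODA_FT - DISPLACED_THRESHOLD_FT     # 8463
toda_minus_asda = TODA_FT - ASDA_FT                     # 848

# The computed LDA agrees with the published LDA to within a foot.
print(abs(lda_from_asda - LDA_FT) <= 1)                 # True
# The gap between the two landing numbers equals TODA minus ASDA.
print(beyond_threshold - lda_from_asda == toda_minus_asda)  # True
```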

References

(Source material)

14 CFR 1, Title 14: Aeronautics and Space, Definitions and Abbreviations, Federal Aviation Administration, Department of Transportation

14 CFR 25, Title 14: Aeronautics and Space, Airworthiness Standards: Transport Category Airplanes, Federal Aviation Administration, Department of Transportation

Advisory Circular 150/5300-13B, Airport Design, 3/31/2022, U.S. Department of Transportation

ICAO Annex 14 - Aerodromes - Vol I - Aerodrome Design and Operations, International Standards and Recommended Practices, Annex 14 to the Convention on International Civil Aviation, Vol I, 6th edition July 2013